The curious and observant may have noticed the appearance of a new service in OneFS 9.8, namely isi_iceage_d.

For example:

# isi services -a | grep -i iceage

isi_iceage_d Ice Age Monitor Daemon Enabled

So what exactly is this new IceAge process and what does it do, you may ask?

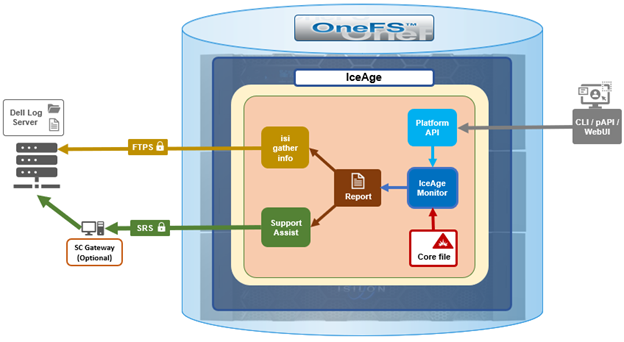

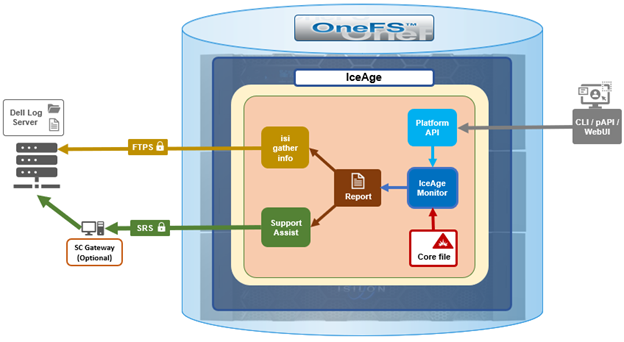

Well, OneFS IceAge is a Python tool based on lldb, which automatically extracts, optimizes, compresses, and disseminates information from OneFS core files. The goal is to streamline the detection and diagnosis of issues and bugs, and to improve time to resolution.

The IceAge service (IceAge monitor) performs the following core functions:

| Function | Description |
| --- | --- |
| Detection | Monitoring the /var/crash directory for fresh core files. |
| Extraction | Extraction (and subsequent removal) of IceAge reports and headers from cores. |
| Upload | Uploading reports to Dell Backend Services. |

The IceAge service runs on a cluster, immediately extracting IceAge reports from any core dumps as they are generated and writing them to a JSON report file, which is suitable for further processing. Reports also include a stack trace to show the potential crash cause. Information can be extracted without the presence of debug symbols, and can be retroactively annotated with further useful information (such as source code line numbers) once symbols are available. Additional information can also be extracted from debug symbols in order to help debug application-specific data structures from a core.

Once a core has been detected, optimized, and processed, IceAge then uses two principal methods of transmission for the report and header:

| Uploader | Description |
| --- | --- |
| isi_gather_info | In addition to OneFS logsets, the isi_gather_info utility in OneFS 9.8 and later collects and transmits JSON IceAge reports and headers as a default option, and can still send full cores when requested via command line options. |
| SupportAssist | Secure Remote Services (SRS) is used for sending alerts, log_gathers, usage intelligence, and managed device status to the backend. OneFS uses SRS to communicate with Dell Support’s backend systems. OneFS 9.8 introduces the ability to collect and send JSON IceAge reports, and can still send full cores when requested via a specific command. |

The isi_gather_info command on the cluster gathers various files, including dumps and the output of various commands, and uploads them to Dell Support. The /usr/bin/remotesupport directory contains a set of gather and remote support scripts which are designed to collate specific log information about the cluster. Under this directory is the ‘get_data_iceage’ script which, in conjunction with ‘GetData.sh’, gathers and uploads data about IceAge reports and headers. These scripts are typically called from the Remote Support Shell, which is a simple, limited shell, solely for running these support scripts.

To aid identification, the header files are generated with the following nomenclature:

YYYYMMDD_HHMMSS_$(SWID)_$(RANDOM_GUID)_IceAgeHeader.tgz

For example:

20240712_173427_ELMISL0121YLVD_4793e5ec-3605-41a6-b72c-d3c404059988_IceAgeHeader.tgz
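As an illustration of the naming scheme above, the following minimal Python sketch splits a header file name back into its timestamp, SWID, and GUID. The regular expression and the `parse_header_name` helper are hypothetical, inferred only from the nomenclature shown here, and assume the SWID itself contains no underscores.

```python
import re
import uuid
from datetime import datetime
from typing import NamedTuple


class IceAgeHeaderName(NamedTuple):
    created: datetime
    swid: str
    guid: uuid.UUID


# Assumed pattern, inferred from the documented nomenclature:
# YYYYMMDD_HHMMSS_<SWID>_<GUID>_IceAgeHeader.tgz
# (assumes the SWID contains no underscores).
_HEADER_RE = re.compile(
    r"^(?P<stamp>\d{8}_\d{6})_(?P<swid>[^_]+)_"
    r"(?P<guid>[0-9a-fA-F-]{36})_IceAgeHeader\.tgz$"
)


def parse_header_name(filename: str) -> IceAgeHeaderName:
    """Split an IceAge header file name into timestamp, SWID, and GUID."""
    match = _HEADER_RE.match(filename)
    if match is None:
        raise ValueError(f"not an IceAge header file name: {filename!r}")
    created = datetime.strptime(match["stamp"], "%Y%m%d_%H%M%S")
    return IceAgeHeaderName(created, match["swid"], uuid.UUID(match["guid"]))


if __name__ == "__main__":
    example = (
        "20240712_173427_ELMISL0121YLVD_"
        "4793e5ec-3605-41a6-b72c-d3c404059988_IceAgeHeader.tgz"
    )
    print(parse_header_name(example))
```

A helper like this can be handy when sorting or filtering a directory of headers by creation time or by cluster SWID.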

The header also includes backtrace information and several important sections from the IceAge JSON report.

When IceAge headers have been created and written out to a temporary file, the temporary file is renamed to match the ESRS backend requirements and is uploaded to Dell (i.e., CloudIQ). If the upload succeeds, the file is removed. However, if the upload fails for any reason, the file is placed into a ‘retry’ state, and a subsequent upload is attempted at the beginning of the next interval. Upload retry files are stored in the ‘/ifs/.ifsvar/iceage-reports/headers/retries’ directory.
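The upload and retry behavior described above can be sketched roughly as follows. This is not the IceAge implementation: `dispatch_headers` and the `upload` callable are hypothetical names, and processing retry files before new headers is an assumption made purely for illustration.

```python
import shutil
from pathlib import Path
from typing import Callable, List


def dispatch_headers(
    headers_dir: Path,
    retries_dir: Path,
    upload: Callable[[Path], bool],
) -> List[Path]:
    """Try to upload every pending header once; return the files that failed.

    `upload` is a hypothetical callable that returns True on success.
    """
    retries_dir.mkdir(parents=True, exist_ok=True)
    # Assumption: files left over from the previous interval are tried first.
    pending = sorted(retries_dir.glob("*_IceAgeHeader.tgz"))
    pending += sorted(headers_dir.glob("*_IceAgeHeader.tgz"))

    failed: List[Path] = []
    for header in pending:
        try:
            ok = upload(header)
        except OSError:
            ok = False
        if ok:
            header.unlink()  # a successful upload removes the file
            continue
        if header.parent != retries_dir:
            # A failed upload parks the file in the 'retries' directory.
            target = retries_dir / header.name
            shutil.move(str(header), str(target))
            header = target
        failed.append(header)
    return failed
```

Calling the function once per interval gives the behavior in the text: successful uploads disappear, and failures wait in the retries directory for the next pass.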

Architecturally, IceAge looks and operates as follows:

The core isi_iceage_d daemon spawns several additional processes, which run on each node in the cluster. These include:

- IceAge monitor upload

- Cluster queue watcher

- Local core watcher

- Local core timer

For example:

# ps -auxw | grep -i iceage

root 4668 0.0 0.0 99976 50480 - S Sat12 1:34.52 /usr/libexec/isilon/isi_iceage_d /usr/local/lib/python3.8/site

root 4688 0.0 0.0 126200 51996 - I Sat12 0:06.87 iceage_monitor_upload (isi_iceage_d)

root 63440 0.0 0.0 99976 50480 - S 18:33 0:00.00 iceage_monitor: cluster queue watcher (isi_iceage_d)

root 63459 0.0 0.0 102384 50656 - S 18:33 0:00.00 iceage_monitor: local core watcher (isi_iceage_d)

root 63462 0.0 0.0 99976 50480 - S 18:33 0:00.00 iceage_monitor: local core timer (isi_iceage_d)

When a OneFS component or service fails and a core file is written to /var/crash, IceAge enters it into a queue under /ifs/.ifsvar/iceage-cores/, in which cores awaiting processing are held. To facilitate this, OneFS creates a temporary crash space on the cluster’s existing drives and provisions an ephemeral UFS file system for IceAge to use. IceAge plug-ins are also provided for several OneFS protocols and data services, such as NFS and SMB, in order to generate more detailed reports from the often large and complex cores derived from issues with these processes.

Additionally, the IceAge cluster monitor service watches for cores in the queue and processes them one by one. This generates a report with a summary of information from the core. These reports can then be transmitted to Dell Support by the isi_gather_info process, or via SupportAssist (ESE).

Enabled by default in OneFS 9.8 and later, the IceAge service is managed by MCP, and can be enabled and disabled via the ‘isi services’ CLI command.

# isi services -a isi_iceage_d

isi: Service 'isi_iceage_d' is enabled.

# isi services -a isi_iceage_d disable

The service 'isi_iceage_d' has been disabled.

# isi services -a isi_iceage_d enable

The service 'isi_iceage_d' has been enabled.

Integration with SupportAssist/ESE and isi_gather_info allows IceAge to automatically and securely send the generated report text files back to Dell Support.

Configuration-wise, the IceAge monitor uses a gconfig file in which parameters such as log level can be specified. For example:

# isi_gconfig -t iceage_monitor

[root] {version:1}

iceage_monitor.queue_max_size_gb (int) = 20

iceage_monitor.retention_period_min (int) = 43800

iceage_monitor.log_level (char*) = INFO

iceage_monitor.header_dispatch (bool) = true

iceage_monitor.min_core_create_time_supported (int) = 1715245735

The above configuration is also exposed via the OneFS PlatformAPI, and any modifications are recorded in the /ifs/.ifsvar/iceage_monitor_config_changes.log file.
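For scripting purposes, the gconfig output above can be parsed into typed values. The following is a minimal Python sketch, with `parse_gconfig` being a hypothetical helper. Reading `retention_period_min` as minutes and `min_core_create_time_supported` as Unix epoch seconds is an assumption based on the parameter names, not on documented behavior.

```python
import re
from datetime import datetime, timezone
from typing import Any, Dict

# Matches lines such as: iceage_monitor.log_level (char*) = INFO
_LINE_RE = re.compile(
    r"^(?P<key>[\w.]+)\s+\((?P<type>[^)]+)\)\s+=\s*(?P<value>.*)$"
)

SAMPLE = """\
[root] {version:1}
iceage_monitor.queue_max_size_gb (int) = 20
iceage_monitor.retention_period_min (int) = 43800
iceage_monitor.log_level (char*) = INFO
iceage_monitor.header_dispatch (bool) = true
iceage_monitor.min_core_create_time_supported (int) = 1715245735
"""


def parse_gconfig(text: str) -> Dict[str, Any]:
    """Convert 'key (type) = value' lines into a dict of typed values."""
    settings: Dict[str, Any] = {}
    for line in text.splitlines():
        match = _LINE_RE.match(line.strip())
        if match is None:
            continue  # skips blank lines and the '[root] {version:1}' header
        raw = match["value"].strip()
        if match["type"] == "int":
            settings[match["key"]] = int(raw)
        elif match["type"] == "bool":
            settings[match["key"]] = raw.lower() == "true"
        else:
            settings[match["key"]] = raw
    return settings


if __name__ == "__main__":
    cfg = parse_gconfig(SAMPLE)
    # Assumption: the retention period is expressed in minutes.
    days = cfg["iceage_monitor.retention_period_min"] / (60 * 24)
    # Assumption: the minimum core creation time is a Unix timestamp.
    oldest = datetime.fromtimestamp(
        cfg["iceage_monitor.min_core_create_time_supported"], tz=timezone.utc
    )
    print(f"retention: {days:.1f} days, oldest supported core: {oldest}")
```

Under those assumptions, the default retention period of 43800 minutes works out to roughly 30 days.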

The basic flow of the IceAge service and SupportAssist transport is as follows:

- First, ensure that SupportAssist is configured and running on the cluster:

# isi supportassist settings view | grep -i enabled

Service enabled: Yes

If not, SupportAssist can be activated as follows:

# isi supportassist settings modify --connection-mode gateway --gateway-host <host_FQDN> --gateway-port 9443 --backup-gateway-host <backup_FQDN> --backup-gateway-port 9443 --network-pools="subnet0.pool0"

Note that the changes made to SupportAssist settings may take some time to take effect.

- Next, generate one or more cores. This can be done with the following CLI syntax:

# isi_noatime isi_kcore <PID> /var/crash/<PID>.<service>.core.gz

For example, creating two NFS core files for the processes with PIDs 22120 and 22121 in the following output:

# ps -aux | grep nfs

root 22109 0.0 0.5 54840 30356 - Ss 17:21 0:00.01 /usr/sbin/isi_netgroup_d -P isi_netgroup_d_nfs

root 22120 0.0 0.4 55000 26652 - Ss 17:21 0:00.04 /usr/libexec/isilon/nfs proxy nfs /var/run/nfs.pid

root 22121 0.0 0.7 111340 42812 - S< 17:21 0:00.13 lw-container nfs (nfs)

root 22175 0.0 0.0 14208 2896 0 S+ 17:21 0:00.00 grep nfs

# isi_noatime isi_kcore 22120 /var/crash/22120.nfs.core.gz

# isi_noatime isi_kcore 22121 /var/crash/22121.nfs.core.gz

# ls -ltr /var/crash | grep -i core

-rw------- 1 root daemon 716005 Jul 9 17:22 22120.nfs.core.gz

-rw------- 1 root daemon 1211863 Jul 9 17:22 22121.nfs.core.gz

- Next, the monitor log shows the location of the report file for each core:

# cat /var/log/isi_iceage_monitor.log

For example:

# cat /var/log/isi_iceage_monitor.log

tme2: 2024-07-09T17:23:30.541904+00:00 <3.6> tme-2(id2) isi_iceage_d[4327]: INFO:cluster.py:176 -- Run ClusterProcess with cores: ['/ifs/.ifsvar/iceage-cores/tme-1-1707499378.08631-22121.nfs.core.gz']

tme2: INFO:__main__.py:569 -- IceAge started

tme2: INFO:__main__.py:320 -- Detected information for /ifs/.ifsvar/iceage-cores/tme-1-1707499378.08631-22121.nfs.core.gz:

tme-2: INFO:__main__.py:360 -- build : b.main.4102r

tme-2: INFO:__main__.py:360 -- domain : user

tme-2: INFO:__main__.py:360 -- executable : /usr/likewise/sbin/lwsmd

tme-2: INFO:__main__.py:360 -- handler : lldb

tme-2: INFO:__main__.py:232 -- Calculating space needed...

tme-2: INFO:__main__.py:250 -- 379992064 bytes.

tme-2: INFO:__main__.py:254 -- Setting up scratch space...

tme-2: INFO:__main__.py:259 -- Ready.

tme-2: INFO:__main__.py:385 -- Set vmem limit to 2147483648 for pid 15640

tme-2: INFO:__main__.py:389 -- Loading core...

tme-2: INFO:__main__.py:391 -- Core /ifs/.ifsvar/iceage-cores/tme-1-1707499378.08631-22121.nfs.core.gz loaded.

tme-2: INFO:__main__.py:394 -- Extracting...

<snip>

isi_iceage_d[15637]: INFO:makedigest.py:124 -- Written tgz file: '/ifs/.ifsvar/iceage-reports/headers/20240209_172334_DEFAULTSWID_db3bb260-88ce-4619-9f48-b9828eddccd5_IceAgeHeader.tgz'

tme-2: 2024-07-09T17:23:34.318304+00:00 <3.6> tme-2(id2) isi_iceage_d[15637]: INFO:makedigest.py:124 -- Written tgz file: '/ifs/.ifsvar/iceage-reports/20240709_172334_DEFAULTSWID_db3bb260-88ce-4619-9f48-b9828eddccd5_IceAgeHeader.tgz'
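Rather than reading the monitor log by eye, the ‘Written tgz file’ entries can be pulled out with a few lines of Python. This is a minimal sketch: `written_headers` is a hypothetical helper, and the only thing it relies on is the message text visible in the log excerpt above.

```python
import re
from typing import Iterable, List

# Message text taken from the makedigest.py log lines shown above.
_WRITTEN_RE = re.compile(r"Written tgz file: '(?P<path>[^']+)'")

SAMPLE_LINES = [
    "tme-2: INFO:__main__.py:394 -- Extracting...",
    "isi_iceage_d[15637]: INFO:makedigest.py:124 -- Written tgz file: "
    "'/ifs/.ifsvar/iceage-reports/headers/20240209_172334_DEFAULTSWID_"
    "db3bb260-88ce-4619-9f48-b9828eddccd5_IceAgeHeader.tgz'",
]


def written_headers(log_lines: Iterable[str]) -> List[str]:
    """Return each header path reported as written, in first-seen order."""
    paths: List[str] = []
    for line in log_lines:
        for match in _WRITTEN_RE.finditer(line):
            if match["path"] not in paths:
                paths.append(match["path"])
    return paths


if __name__ == "__main__":
    for path in written_headers(SAMPLE_LINES):
        print(path)
```

On a cluster, the same function could be fed the lines of /var/log/isi_iceage_monitor.log instead of the sample list.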

- The IceAge JSON files are located under /ifs/.ifsvar/iceage-cores, and contain a wealth of information, including OneFS versions and paths, etc. For example:

# cat tme-2-1720811519.5973-59660.nfs.core.json | grep -i core

"core-file": "/ifs/.ifsvar/iceage-cores/tme-2-1720811519.5973-59660.nfs.core.gz",

"set_core_hook": 18446744071587293992,

"corefile_build": "B_9_8_0_0_003(RELEASE)",

"corefile_version": "Isilon OneFS 9.8.0.0 (Release, Build B_9_8_0_0_003(RELEASE), 2024-03-11 09:27:38, 0x909005000000003)",
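Because the report is plain JSON, it can also be inspected programmatically instead of with grep. The sketch below walks a parsed report and yields every scalar entry whose key contains a given substring. The layout of a real IceAge report is not documented here, so the nesting in the sample (including the `info` key) is purely hypothetical; only the `core-file` and `corefile_build` key names come from the output above. Unlike grep, this matches on key names only, not on values.

```python
import json
from typing import Any, Iterator, Tuple


def find_keys(node: Any, needle: str, prefix: str = "") -> Iterator[Tuple[str, Any]]:
    """Yield (path, value) for scalar entries whose key contains `needle`."""
    if isinstance(node, dict):
        for key, value in node.items():
            path = f"{prefix}.{key}" if prefix else key
            is_scalar = not isinstance(value, (dict, list))
            if is_scalar and needle.lower() in key.lower():
                yield path, value
            yield from find_keys(value, needle, path)
    elif isinstance(node, list):
        for index, item in enumerate(node):
            yield from find_keys(item, needle, f"{prefix}[{index}]")


# Hypothetical report fragment; the real structure may differ.
SAMPLE_REPORT = json.loads("""
{
  "core-file": "/ifs/.ifsvar/iceage-cores/tme-2-1720811519.5973-59660.nfs.core.gz",
  "info": {"corefile_build": "B_9_8_0_0_003(RELEASE)"},
  "threads": [{"name": "main"}]
}
""")

if __name__ == "__main__":
    for path, value in find_keys(SAMPLE_REPORT, "core"):
        print(f"{path}: {value}")
```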

- Finally, if SupportAssist is configured on the cluster, the ESE logs can be used to verify that the reports have been successfully transmitted back to Dell Support with the following CLI command:

# cat /usr/local/ese/var/log/ESE.log | grep -i iceage

For example:

"path": "/ifs/.ifsvar/iceage-reports/headers/20240709_172303_ELMISL0224SM54_0740a853-517c-4fc5-b162-64991d9494b9_IceAgeHeader.tgz",

20067 2024-07-09 17:26:41,235 CP Server Thread-7 INFO DellESE.ese.threads.web.cherrypydata LN: 61 /ifs/.ifsvar/iceage-reports/headers/20240709_172303_ELMISL0224SM54_0740a853-517c-4fc5-b162-64991d9494b9_IceAgeHeader.tgz is a file

20067 2024-07-09 17:26:43,696 Web Dispatcher DEBUG urllib3.connectionpool LN: 474 https://eng-sea-v4scg-01.west.isilon.com:9443 "PUT /esrs/v1/devices/ISILON-GW/ELMISL0224SM54/mft/BINARY-ELMISL0224SM54-20240709T172642Z-33MJ9WiT5Swt4mcLdEwSkMA-20240709_172303_ELMISL0224SM54_0740a853-517c-4fc5-b162-64991d9494b9_IceAgeHeader.tgz HTTP/1.1" 200 0

20067 2024-07-09 17:26:43,699 Web Dispatcher DEBUG DellESE.ese.srs.srswebapi LN: 89 Sending ESE binary file [20240709_172303_ELMISL0224SM54_0740a853-517c-4fc5-b162-64991d9494b9_IceAgeHeader.tgz], Workitem [33MJ9WiT5Swt4mcLdEwSkMA], sent to url https://eng-sea-v4scg-01.west.isilon.com:9443/esrs/v1/devices/ISILON-GW/ELMISL0224SM54/mft/BINARY-ELMISL0224SM54-20240709T172642Z-33MJ9WiT5Swt4mcLdEwSkMA-20240209_172303_ELMISL0224SM54_0740a853-517c-4fc5-b162-64991d9494b9_IceAgeHeader.tgz. Date: 2024-02-09T17:26:43.282+0000. Status: 200

"path": "/ifs/.ifsvar/iceage-reports/headers/20240209_172334_ELMISL0224SM54_db3bb260-88ce-4619-9f48-b9828eddccd5_IceAgeHeader.tgz",

20067 2024-07-09 17:26:47,235 CP Server Thread-8 INFO DellESE.ese.threads.web.cherrypydata LN: 61 /ifs/.ifsvar/iceage-reports/headers/20240709_172334_ELMISL0224SM54_db3bb260-88ce-4619-9f48-b9828eddccd5_IceAgeHeader.tgz is a file

20067 2024-07-09 17:26:58,632 Web Dispatcher DEBUG urllib3.connectionpool LN: 474 https://eng-sea-v4scg-01.west.isilon.com:9443 "PUT /esrs/v1/devices/ISILON-GW/ELMISL0224SM54/mft/BINARY-ELMISL0224SM54-20240709T172658Z-3hJcHU9hEomZYyWLCkqh5Jj-20240709_172334_ELMISL0224SM54_db3bb260-88ce-4619-9f48-b9828eddccd5_IceAgeHeader.tgz HTTP/1.1" 200 0

20067 2024-07-09 17:26:58,636 Web Dispatcher DEBUG DellESE.ese.srs.srswebapi LN: 89 Sending ESE binary file [20240709_172334_ELMISL0224SM54_db3bb260-88ce-4619-9f48-b9828eddccd5_IceAgeHeader.tgz], Workitem [3hJcHU9hEomZYyWLCkqh5Jj], sent to url https://eng-sea-v4scg-01.west.isilon.com:9443/esrs/v1/devices/ISILON-GW/ELMISL0224SM54/mft/BINARY-ELMISL0224SM54-20240709T172658Z-3hJcHU9hEomZYyWLCkqh5Jj-20240709_172334_ELMISL0224SM54_db3bb260-88ce-4619-9f48-b9828eddccd5_IceAgeHeader.tgz. Date: 2024-07-09T17:26:58.362+0000. Status: 200
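To check transmission status across many headers, the ‘Sending ESE binary file’ entries can be summarized with a short Python sketch such as the one below. The `upload_statuses` helper is hypothetical, and the pattern is based only on the message format visible in the excerpt above. The URL in the sample line is a placeholder.

```python
import re
from typing import Dict, Iterable

# Pattern inferred from the srswebapi log lines shown above.
_SENT_RE = re.compile(
    r"Sending ESE binary file \[(?P<file>[^\]]+)\], "
    r"Workitem \[(?P<workitem>[^\]]+)\].*?Status: (?P<status>\d+)"
)

SAMPLE_LINE = (
    "20067 2024-07-09 17:26:58,636 Web Dispatcher DEBUG "
    "DellESE.ese.srs.srswebapi LN: 89 Sending ESE binary file "
    "[20240709_172334_ELMISL0224SM54_db3bb260-88ce-4619-9f48-"
    "b9828eddccd5_IceAgeHeader.tgz], Workitem [3hJcHU9hEomZYyWLCkqh5Jj], "
    "sent to url https://gateway.example.invalid:9443/placeholder. "
    "Date: 2024-07-09T17:26:58.362+0000. Status: 200"
)


def upload_statuses(log_lines: Iterable[str]) -> Dict[str, int]:
    """Map each IceAge header file name to its last reported HTTP status."""
    statuses: Dict[str, int] = {}
    for line in log_lines:
        match = _SENT_RE.search(line)
        if match and match["file"].endswith("_IceAgeHeader.tgz"):
            statuses[match["file"]] = int(match["status"])
    return statuses


if __name__ == "__main__":
    for name, status in upload_statuses([SAMPLE_LINE]).items():
        print(f"{status} {name}")
```

A status of 200 for a given header corresponds to the successful PUT requests shown in the log excerpt.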

There are some caveats to be aware of with IceAge, and it may not be able to process every core in all situations. As such, it is considered ‘best effort’ relative to security and performance constraints.

Specifically, the scenarios under which IceAge monitor will not automatically process cores include:

| Component | Condition Details |
| --- | --- |
| Filesystem | During unavailability of ifs. |
| On-disk encryption | On SED nodes, because IceAge uses the band on SEDs that is not encrypted for scratch. |
| Drive maintenance | During drive distmirror rebalancing and drive firmware upgrade. |
| Capacity | If OneFS is unable to find sufficient free space on drives. |
| Memory | If it would require too much memory that could cause instability. The vmem limit is determined by the amount of scratch space needed as well as system memory. |
| Version | For any cores generated on OneFS versions older than the running build, IceAge may struggle to interpret them accurately using the debug symbols from the current build. |